Google rankings you track obsessively. Paid search performance you review every week. But when a shopper asks ChatGPT "what's the best eco-friendly water bottle under $50," you have no idea whether your brand comes up, or whether a competitor you've never heard of is getting the recommendation instead.

That blind spot is the problem this guide addresses. Here's how to build a clear picture of where you stand in AI-generated recommendations, what's driving it, and what to do when the numbers aren't where you want them.

What You're Actually Measuring

Your traditional analytics show you what happens after someone lands on your site. AI visibility tracking shows you what happens before: the conversation that determines whether your brand ever gets considered at all.

When ChatGPT answers a shopping question, it typically names three to five brands. If yours isn't one of them, that potential customer moves on without ever knowing you exist. There's no page two. There's no second chance. Understanding where you sit in those responses, how often you appear, what language is used to describe you, and who else is consistently mentioned alongside you gives you the raw material to actually improve.

Start With the Right Prompts

The temptation is to track your own brand name. Resist it. You'll almost certainly appear when someone searches specifically for you. That's not the gap worth measuring.

What matters is how you perform on the queries your customers ask before they've picked a brand. Think about the questions your support team fields, the language people use in reviews, the problems your product solves. A good tracking prompt sounds like a real shopper talking: "What's the best water bottle for keeping drinks cold on long hikes?" or "Which sustainable kitchenware brands are worth buying from?" Not "best water bottle" or "top brands in the world."

Build a list of ten to fifteen prompts that represent how real buyers in your category talk when they're in research mode. Those are the conversations you need to be part of.
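
As a rough illustration of that filter, the sketch below drops prompts that name your brand or that are too short to sound like a real question. The brand name `AquaTrail` and the six-word minimum are assumptions made up for the example, not rules from any platform.

```python
BRAND = "AquaTrail"  # hypothetical brand name, used only for illustration

candidate_prompts = [
    "What's the best water bottle for keeping drinks cold on long hikes?",
    "Which sustainable kitchenware brands are worth buying from?",
    "best water bottle",
    "Is AquaTrail a good brand?",
]


def is_trackable(prompt: str, brand: str, min_words: int = 6) -> bool:
    """Keep unbranded prompts that are long enough to read like a shopper's question.

    min_words is an arbitrary cutoff chosen for this sketch.
    """
    unbranded = brand.lower() not in prompt.lower()
    conversational = len(prompt.split()) >= min_words
    return unbranded and conversational


tracking_prompts = [p for p in candidate_prompts if is_trackable(p, BRAND)]

for prompt in tracking_prompts:
    print(prompt)
```

A word count is a crude stand-in for "sounds like a real shopper," so treat the output as a shortlist to review by hand.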

Test Across Platforms: They Don't All Agree

ChatGPT, Gemini, Claude, and Perplexity all draw on different training data and weight sources differently. A brand that ranks consistently in ChatGPT responses might barely appear in Gemini, and vice versa. ChatGPT alone has over 700 million weekly active users as of mid-2025 [1], making it the highest-priority platform for most brands, but Gemini's deep integration with Google Search means it influences a distinct and growing audience.

Running your prompts across multiple platforms takes more time, but it gives you an honest picture of where you actually stand versus where you assume you stand.
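
To see how much work that implies, here is a minimal sketch that expands a prompt list into one run per platform. It only builds the checklist; it does not call any platform, and the `build_runs` helper is a hypothetical name for this example.

```python
from itertools import product

PLATFORMS = ["ChatGPT", "Gemini", "Claude", "Perplexity"]

prompts = [
    "What's the best water bottle for keeping drinks cold on long hikes?",
    "Which sustainable kitchenware brands are worth buying from?",
]


def build_runs(prompts, platforms):
    """Return one record per (prompt, platform) pair so no combination is skipped."""
    return [
        {"platform": platform, "prompt": prompt}
        for prompt, platform in product(prompts, platforms)
    ]


runs = build_runs(prompts, PLATFORMS)
print(f"{len(runs)} runs per tracking cycle")
```

With ten prompts instead of two, the same expansion yields forty runs per cycle.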

The Metrics That Tell the Story

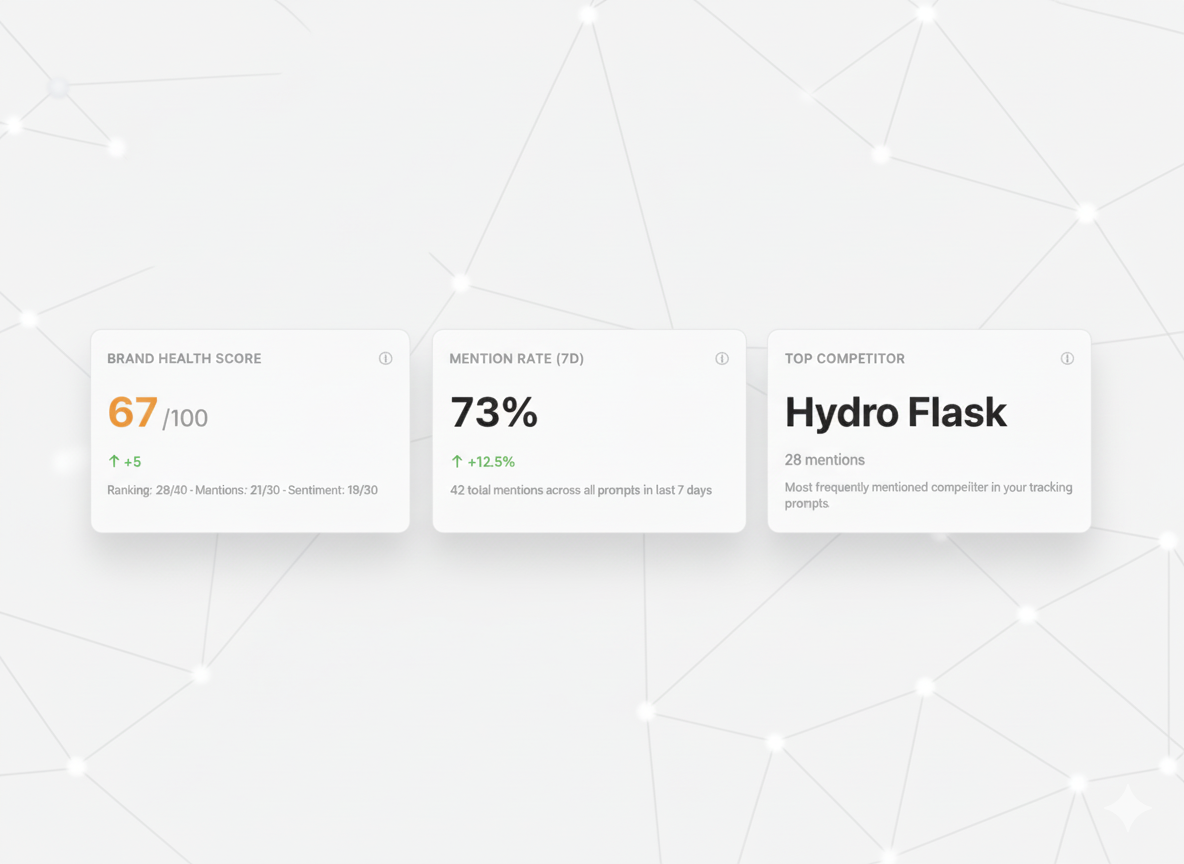

Once you're running queries consistently, four numbers matter most.

Mention rate is the most fundamental: out of all the times you run your tracking prompts, what percentage of responses include your brand? If you're testing ten queries weekly and appearing in three responses, your mention rate is 30%. That's your baseline, and everything you do from here is aimed at moving it.
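
The arithmetic is simple enough to sketch in a few lines. The responses below are invented placeholders and `AquaTrail` is a hypothetical brand; the calculation assumes a plain case-insensitive substring match counts as a mention.

```python
def mention_rate(responses, brand):
    """Share of responses that mention the brand (case-insensitive substring match)."""
    if not responses:
        return 0.0
    hits = sum(brand.lower() in response.lower() for response in responses)
    return hits / len(responses)


# Placeholder responses: 3 of 10 mention the hypothetical brand.
responses = ["Top picks: AquaTrail and HydroPeak."] * 3 + [
    "Top picks: HydroPeak and SummitFlask."
] * 7

rate = mention_rate(responses, "AquaTrail")
print(f"Mention rate: {rate:.0%}")
```

A substring match can miscount brands whose names are common words, so spot-check the hits if that applies to you.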

Average ranking position tells you where in the response you appear. Being named first carries far more weight than being the fourth brand mentioned after the AI has already given detailed explanations of three competitors. Track both whether you appear and where.
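
One way to approximate position, assuming you keep a list of the brands you care about, is to order them by where each first appears in the response text. This is a sketch under that assumption; the brand names and responses are invented.

```python
from typing import Optional


def brand_position(response: str, tracked_brands: list, target: str) -> Optional[int]:
    """1-based rank of target among tracked brands, ordered by first appearance."""
    text = response.lower()
    first_seen = [(text.find(brand.lower()), brand) for brand in tracked_brands]
    ordered = [brand for index, brand in sorted(first_seen) if index != -1]
    return ordered.index(target) + 1 if target in ordered else None


def average_position(responses, tracked_brands, target) -> Optional[float]:
    """Mean position across the responses that mention the target at all."""
    positions = [brand_position(r, tracked_brands, target) for r in responses]
    found = [p for p in positions if p is not None]
    return sum(found) / len(found) if found else None


brands = ["AquaTrail", "HydroPeak", "SummitFlask"]  # hypothetical names
responses = [
    "HydroPeak leads for insulation, followed by AquaTrail and SummitFlask.",
    "AquaTrail is a strong pick; HydroPeak costs more.",
    "SummitFlask and HydroPeak are the usual picks.",
]

avg = average_position(responses, brands, "AquaTrail")
print(avg)
```

Responses that omit the brand are excluded from the average here, which is why position should be read alongside mention rate rather than on its own.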

Sentiment is easy to overlook but important. AI platforms don't just name brands. They describe them. "Known for exceptional build quality" and "some users report durability issues" are both mentions, but they do very different things for your conversion rate. Read the actual language being used about your brand, not just whether your name appears.
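
Rather than scoring sentiment automatically, a small helper can pull out just the sentences that mention you so they are easy to read side by side. The sketch below assumes a naive split on sentence-ending punctuation; the brand and response text are made up.

```python
import re


def brand_sentences(response: str, brand: str) -> list:
    """Return the sentences in a response that mention the brand."""
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", response.strip())
    return [s for s in sentences if brand.lower() in s.lower()]


response = (
    "AquaTrail is known for exceptional build quality. "
    "HydroPeak is the cheaper option. "
    "Some users report durability issues with AquaTrail lids."
)

for sentence in brand_sentences(response, "AquaTrail"):
    print("-", sentence)
```

The split will stumble on abbreviations and decimals, which is acceptable when the goal is a reading list rather than a score.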

Competitor co-mentions show you the real competitive landscape in AI responses, which often doesn't match what you'd see in traditional search. You may find brands appearing consistently alongside you that you've never benchmarked against, or discover that a competitor you thought was minor is getting mentioned first in the queries that matter most to your category.
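
Counting co-mentions can be sketched with a simple tally, assuming you maintain a list of brand names to look for. It cannot discover brands you have never heard of; those still need to be spotted by reading the responses and added to the list. All names below are hypothetical.

```python
from collections import Counter


def co_mentions(responses, tracked_brands, target):
    """Count how often each other tracked brand appears in responses that mention target."""
    counts = Counter()
    for response in responses:
        text = response.lower()
        if target.lower() not in text:
            continue  # only responses that include the target count as co-mentions
        for brand in tracked_brands:
            if brand != target and brand.lower() in text:
                counts[brand] += 1
    return counts


brands = ["AquaTrail", "HydroPeak", "SummitFlask"]
responses = [
    "AquaTrail and HydroPeak both insulate well.",
    "HydroPeak, SummitFlask, and AquaTrail are solid choices.",
    "SummitFlask is the budget pick.",
]

counts = co_mentions(responses, brands, "AquaTrail")
print(counts.most_common(3))
```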

Get a Baseline Before You Do Anything Else

Before you change a word of your content or rebuild your llms.txt, run your full prompt list and record what you find: your mention rate, your average position, the three brands that appear most often alongside you, and the language used to describe you. Write it down. This is your before picture, and without it you have no way to know whether what you do next is actually working.
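
If a spreadsheet feels too loose, the before picture can be captured as a small structured record. This is only a sketch: the field names are assumptions, and every value below is a placeholder rather than real data.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Baseline:
    recorded_on: str          # date of the run, e.g. ISO format
    mention_rate: float       # share of responses mentioning the brand
    average_position: float   # mean position when mentioned
    top_co_mentions: list     # brands most often named alongside you
    descriptions: list        # language the AI used about your brand


# Placeholder values for illustration only.
baseline = Baseline(
    recorded_on="2025-01-06",
    mention_rate=0.3,
    average_position=2.5,
    top_co_mentions=["HydroPeak", "SummitFlask", "TrailSip"],
    descriptions=["a popular option"],
)

serialized = json.dumps(asdict(baseline), indent=2)
print(serialized)
```

Saving one such record per run, in whatever storage you prefer, is what makes later comparisons possible.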

The free AI visibility calculator is a fast way to get that first snapshot across ChatGPT and Gemini without setting up anything manually.

What to Do With What You Find

Low mention rate usually means the web doesn't have enough clear, consistent information about your brand for AI models to confidently recommend you. The fix is less about tricks and more about fundamentals: better product documentation, more third-party coverage, and more detailed reviews on the platforms AI models pull from.

Ranking below competitors on queries you should own typically points to a positioning problem. Look at what the AI says about the brands ranking above you: the language it uses, the attributes it highlights. Then look at whether your content makes those same attributes clear and easy to find. Often the gap isn't that competitors are doing something sophisticated; it's that their product pages directly answer the questions AI models are trying to answer, and yours don't.

Neutral or vague sentiment, like descriptions of "a popular option" with no specifics, usually means AI models don't have enough differentiated information about you to say anything more concrete. The solution is to give them something to work with: specific claims, backed by evidence, that appear consistently across your site and in third-party sources.
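
The three diagnoses above can be read as a simple checklist. The sketch below encodes them as rules; the thresholds and the list of generic phrases (beyond "a popular option") are assumptions chosen for illustration, so tune them to your own category.

```python
# "a popular option" comes from the example above; the other phrases are hypothetical.
GENERIC_PHRASES = ("a popular option", "a good choice", "a well-known brand")


def triage(mention_rate, average_position, descriptions,
           low_rate=0.2, weak_position=3.0):
    """Map tracked metrics to the likely problem areas. Thresholds are assumed."""
    findings = []
    if mention_rate < low_rate:
        findings.append(
            "low mention rate: strengthen documentation, third-party coverage, reviews"
        )
    if average_position is not None and average_position > weak_position:
        findings.append(
            "ranking below competitors: compare the attributes AI highlights for them"
        )
    vague = descriptions and all(
        any(phrase in d.lower() for phrase in GENERIC_PHRASES) for d in descriptions
    )
    if vague:
        findings.append("vague sentiment: publish specific, evidence-backed claims")
    return findings


for finding in triage(0.1, 4.0, ["A popular option for hikers."]):
    print("-", finding)
```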

Manual Tracking vs. Automated Tracking

Running ten prompts across four platforms once a week is manageable when you're starting out. It takes time, but it builds intuition about how AI models respond to your category, and that intuition is valuable. Do it manually for a few weeks before you automate anything.

At scale, though, manual tracking breaks down fast. Inconsistency creeps in, prompts get skipped, and you lose the longitudinal data that makes trends visible. That's where LLMFriendly.ai fits in. It runs your prompts automatically across ChatGPT and Gemini on a consistent schedule, tracks your ranking position and competitor co-mentions over time, and surfaces changes so you can act on them rather than just observe them.

The Mistake Most Brands Make

They check their AI visibility once, note that it's not great, and then go optimize their content, without ever checking again to see if anything changed. AI models update. Competitors move. Your own content authority grows. A snapshot from three months ago tells you almost nothing about where you stand today.

Weekly tracking isn't paranoia. It's the minimum cadence needed to catch shifts early enough to respond to them. The brands winning in AI-mediated discovery aren't necessarily the ones with the best products. They're the ones paying close enough attention to know when something changes and act quickly when it does.
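
Catching a shift means comparing this week's numbers with last week's. The following sketch flags changes between two snapshots; the snapshot fields, the thresholds, and the brand names are all assumptions made for the example.

```python
def flag_changes(previous, current, rate_threshold=0.10, position_threshold=1.0):
    """Return human-readable alerts for notable week-over-week changes."""
    alerts = []

    rate_delta = current["mention_rate"] - previous["mention_rate"]
    if abs(rate_delta) >= rate_threshold:
        alerts.append(f"mention rate moved {rate_delta:+.0%}")

    # A positive position delta means the brand is named later in responses.
    position_delta = current["average_position"] - previous["average_position"]
    if abs(position_delta) >= position_threshold:
        alerts.append(f"average position moved {position_delta:+.1f}")

    new_rivals = set(current["co_mentions"]) - set(previous["co_mentions"])
    for rival in sorted(new_rivals):
        alerts.append(f"new co-mentioned brand: {rival}")

    return alerts


# Placeholder snapshots for illustration only.
last_week = {"mention_rate": 0.4, "average_position": 2.0, "co_mentions": ["HydroPeak"]}
this_week = {
    "mention_rate": 0.2,
    "average_position": 3.5,
    "co_mentions": ["HydroPeak", "SummitFlask"],
}

alerts = flag_changes(last_week, this_week)
for alert in alerts:
    print("-", alert)
```

With only ten or fifteen prompts, a single response can swing the mention rate by several points, so a threshold that is too tight will produce noise.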

References

1. MarketMaze. (2025). ChatGPT Search Surge: AI Reshapes Ecommerce in 2025.
